A Harness for LLM Chess: Board State and Context Handling

LLMs are terrible at chess — but mostly because we’re prompting them badly. I built a system that gives them memory, structure, and legal move enforcement. Then I made them play each other.I built a harness for LLMs to play each other in Chess. I was watching Levy (GothamChess)'s video ChatGPT Officially Solved Chess a few days ago - it was a good video. Its fun to watch all the ways the models screw up basic positions, make tactical errors, illegal moves and pull pieces out of the ether. I mean, how can you not like seeing Chatgpt suddenly summon a rook out of thin air or capture a piece *through* another piece? Hysterical!

But I also found myself thinking that a lot of the problems the models are having playing the game were really a context management and move validation problem underneath. So I thought it'd make sense to build a "harness" for the various LLM models to solve those problems.

I'd thought about this project a while back, but I didn't think it would be worth the effort to build out all the API connections, board representation, move validation, image generation, and then standing up a UI myself. But I paid for a month of Claude Code recently and thought it made sense to give it a try now.

Basic tech stack: Python backend with FastAPI (REST + WebSocket streaming), python-chess for board logic and move legality, provider integrations for OpenAI/Anthropic/Google/Copilot-style models, and a React frontend (Vite) for live game/tournament UI. Here's the repo link:

https://github.com/Fingolfin7/ChessHarness

The Project

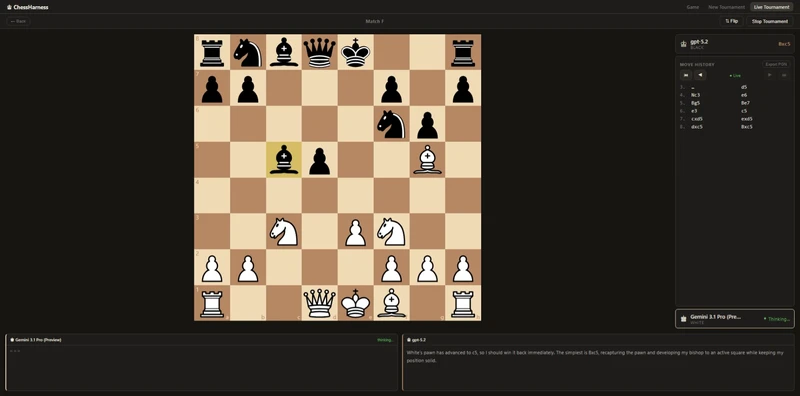

Chess Harness is basically an arena that sits between a chess engine (for rules and legality) and a bunch of LLMs (for move choice + the occasional comedy). You pick two models, give one White and one Black, and then it runs the loop: show the position ask for a move validate it apply it repeat.

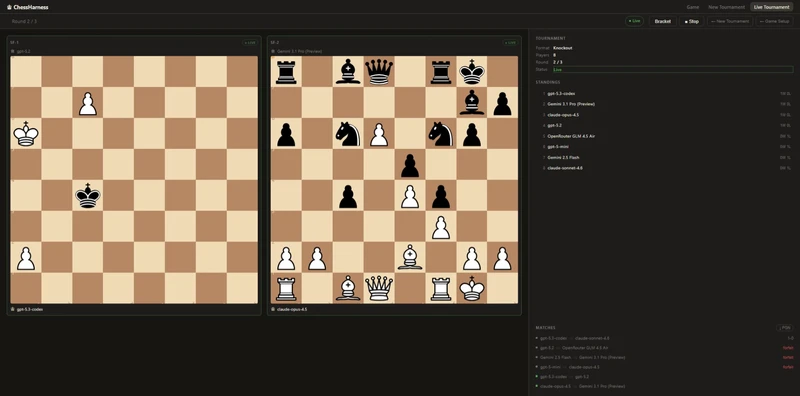

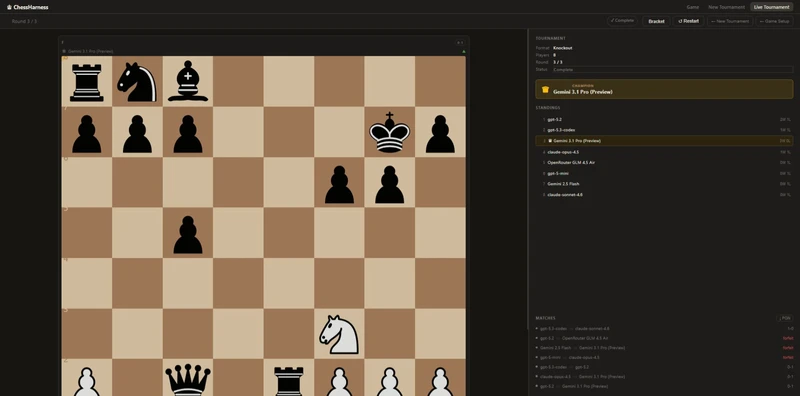

You can just run a single game, but the thing I actually wanted was the tournament: a knockout bracket where a bunch of models enter and you get to watch who survives. The UI has a live tournament view, standings, and ongoing matches.

The core problems with LLM Chess

When people say “LLMs are bad at chess,” it’s true in a very specific way. They’re not always losing because they missed a tactic or misjudged an endgame. A lot of the time they’re losing because they don’t even agree with reality. They’ll confidently describe a position that isn’t on the board, or respond with a move that would be great... if their queen hadn’t been captured three turns ago.

That’s why I kept coming back to the idea that the real issue is context. Humans don’t “remember the board state” in a fragile way; we’re literally looking at it. LLMs are being asked to reconstruct the board from a conversation transcript, which is like trying to play blindfold chess except your memory is a probabilistic autocomplete.

So the harness is mostly about doing the boring part properly: always provide the current board state, always constrain outputs into something parseable, and always have a rules layer that can say “nope, illegal” and make the model try again.

Giving each model a memory

One thing I was very intentional about is that each model gets its own internal conversational thread that persists for the entire game.

White doesn’t just get “current FEN move.” White gets the full history of its own prior reasoning. Same for Black. They don’t share a thread. They don’t see each other’s internal thoughts. But they do see their own previous plans, evaluations, and ideas.

That means if White says on move 8, “I’m building toward a kingside pawn storm,” that thought is still in its context on move 12. It doesn’t have to reinvent its strategy every turn. It can maintain continuity.

Without that, they are playing each move without any idea of what they were doing in the previous generation or what their plans were.

Once you give a model persistent internal memory (scoped correctly per player), the games start to feel less like random move generators and more like something attempting an actual plan.

Structured outputs

Each response is structured like this:

## Reasoning

...

## Move

...

At first this was just practical. It makes move extraction reliable. You don’t have to guess which of three candidate moves it “really meant.” The harness knows exactly where to look. It also allows you to surface the model’s reasoning directly in the UI by just pulling the "Reasoning" section.

So when you’re watching a game, you don’t just see 1...Nf6.

You see:

“Black aims to challenge the center and prepare ...e5.”

And then three moves later you can watch it completely abandon that plan and hang a rook.

That same reasoning can be embedded into annotated PGNs too. So instead of a dry game score, you get a record of what the model thought was happening at each step.

It turns the project from “LLMs playing chess” into “LLMs explaining their delusions in real time.”

Conclusion

The repo is below. I've been running tournaments for a few days now and the models still surprise me. It fascinating to watch how they reason through positions and come up with ideas. More often with delusional confidence that sends me straight to the logs just to confirm if they had actually gotten a copy of the current board state... lol.

A pattern I've noticed: Gemini models consistently outperform the rest. My current theory is spatial reasoning — they're somehow better at holding the board in working memory. Could be wrong. Worth testing more.

I'd love your thoughts. Memory architecture, structured output, tournament flow, whatever catches your eye. And if you end up starring it, I won't complain. Stars are nice. :)

Might build this into a proper benchmark someday. For now, it's just fun to watch.

You may also like

CM HGabor

CM HGaborHow titled players lie to you

This post is a word of warning for the average club player. As the chess world is becoming increasin… Tobs40

Tobs40Why a reachable position can have at most 218 playable moves

I hope that the title is unambiguous enough now and I wholeheartedly apologize to all the people who… thibault

thibaultHow I started building Lichess

I get this question sometimes. How did you decide to make a chess server? The truth is, I didn't. ChessMonitor_Stats

ChessMonitor_Stats